“It’s an energy field created by all living things. It surrounds us and penetrates us; it binds the galaxy together.”

This Obi-Wan Kenobi quote dates back to a long time ago in a galaxy far, far away, but it also fits today’s AI, which is present in many parts of our daily lives. The HR sphere is no exception: artificial intelligence is now a fixture of recruitment and talent management. Many organizations have found in AI a time-saving tool that helps both recruiters and talent managers perform their tasks more effectively, assisted by algorithm-based suggestions.

But with great power comes great responsibility. The toughest challenges to overcome with artificial intelligence are closely related to concerns around how much we should let it affect our decisions and how we can limit it to fit organizational goals and needs.

Dimitri Boylan, CEO of Avature, Rabih Zbib, Avature’s AI expert, and David Wilson, CEO of Fosway, got together to discuss the ethical issues of artificial intelligence in recruitment and talent management and Avature’s approach to them. As a plus, each of them shared their insight into the future development of artificial intelligence in the HR industry.

The Evolution of AI

In a nutshell, artificial intelligence is “the human creation of a scientific subset that focuses on non-human intelligence.” In everyday English, it involves the simulation of human intelligence processes by machines, especially computer systems. Surprisingly, we can trace this technological development to theories that date back hundreds of years, long before AI could automatically filter candidates and suggest new hires for a specific job opening.

But what matters most to us here is the idea of machine learning, which traces back to Alan Turing’s 1950 paper introducing what became known as the Turing test, where he questioned the ability of a computer to think like a human.

The idea of creating a machine that could acquire new knowledge and apply it to a specific field gave rise to the role of artificial intelligence in recruitment and various other HR areas. Sourcing and recruiting employees, and developing them further once they are part of the organization, are the key areas where artificial intelligence is applied in human resources. However, its evolution has also fuelled the need to develop best practices and implement them in line with organizational core values.

“Organizations need to understand how they can build and apply this technology in a meaningful way.”

David Wilson

CEO of Fosway

What Can, Can’t and Should AI Do?

If allowed, the reach of the different approaches to artificial intelligence can become limitless. When it comes to everyday tasks, it can go from suggesting the best route to avoid real-time traffic and get to your destination promptly to identifying obstacles and automatically valet parking your car. The latter is one of the latest applications of artificial intelligence that actually replaces human intervention and acts completely on its own. But this is not the only case where AI has replaced human labor. Automated tolls, traffic tickets and even our morning coffee are in the hands of AI.

However, in this futuristic approach worthy of a Jetsons episode, we also understand that, in some instances, AI calls for human intervention. This involves not only its creation and development but also setting its boundaries. This is where the ethical issues of artificial intelligence play a crucial role. To what extent should we let AI do things, and what are the best ways to set the limits? With these concerns in mind, at Avature we decided to build our artificial intelligence in-house to assist our solutions.

Artificial Intelligence Best Practices: Avature’s Approach to AI

In the context of talent, the main goals of artificial intelligence are to improve hiring and talent management outcomes, improve the user experience and drive efficiency in processes through machine learning and reasoning. These objectives are met through different AI-powered tools, such as semantic search, candidate shortlisting and personalized mobility recommendations that match various criteria adjusted to each customer’s needs.

But these were not the first or only AI-powered functionalities that AI experts at Avature have developed internally. In the early days of building a recruiting solution, parsing was one of the obstacles the team had to overcome for the technology to be truly effective. When investigating solutions already available in the market, they came across significant language limitations, which prompted them to develop a powerful parser internally. This was the first iteration of Avature’s ethical and effective AI.

“A brain can’t work outside the body where the action takes place. AI is the brain, and the organizations that produce it are the body.”

Dimitri Boylan

CEO of Avature

Because the AI is built internally, the experts at Avature set its limits from scratch, guarding against the ethical issues of artificial intelligence (e.g. in DEI, compliance and bias) before they creep in. This way, Avature can offer an enhanced user experience in every solution without jeopardizing any of these crucial elements. Here are examples of how Avature implements artificial intelligence in recruitment and talent management to deliver the best possible experience to all stakeholders.

Semantic Search

With AI-driven matching capabilities, recruiters can find qualified candidates for new roles, whether internally or externally sourced. AI-powered semantic search allows HR experts to run Google-like searches, where artificial intelligence understands what they are looking for and delivers relevant results that go beyond keyword matching.

Because they use artificial intelligence to promote diversity and reduce bias, these skills-based recruiting campaigns consider what’s important to the role, regardless of other elements such as gender.
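To make the difference between keyword matching and semantic search concrete, here is a minimal Python sketch. It is not Avature’s implementation: the `CONCEPTS` table is a hypothetical, hand-written stand-in for what a real system would learn from data, and the function names and sample profiles are invented for illustration.

```python
# Hypothetical concept map: a hand-written stand-in for a learned language model.
CONCEPTS = {
    "software engineer": "software_dev",
    "developer": "software_dev",
    "programmer": "software_dev",
    "recruiter": "talent_acquisition",
    "talent acquisition specialist": "talent_acquisition",
}


def to_concepts(text):
    """Return the concept labels of every known phrase found in the text."""
    lowered = text.lower()
    return {concept for phrase, concept in CONCEPTS.items() if phrase in lowered}


def keyword_search(query, profiles):
    """Return names whose profile text contains the query verbatim."""
    return [name for name, text in profiles.items() if query.lower() in text.lower()]


def semantic_search(query, profiles):
    """Return names whose profile shares at least one concept with the query."""
    wanted = to_concepts(query)
    return [name for name, text in profiles.items() if wanted & to_concepts(text)]


# Invented sample data. Only job-related text is searched.
profiles = {
    "Ana": "Senior developer, Python and Go",
    "Ben": "Software engineer with cloud experience",
    "Cho": "Talent acquisition specialist",
}

print(keyword_search("software engineer", profiles))   # ['Ben']
print(semantic_search("software engineer", profiles))  # ['Ana', 'Ben']
```

The keyword search misses Ana because her profile says “developer”, while the concept-based search treats both titles as the same kind of role. A production system would likely replace the lookup table with learned text representations, but the principle of matching on meaning is the same.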

Candidate Recommendations

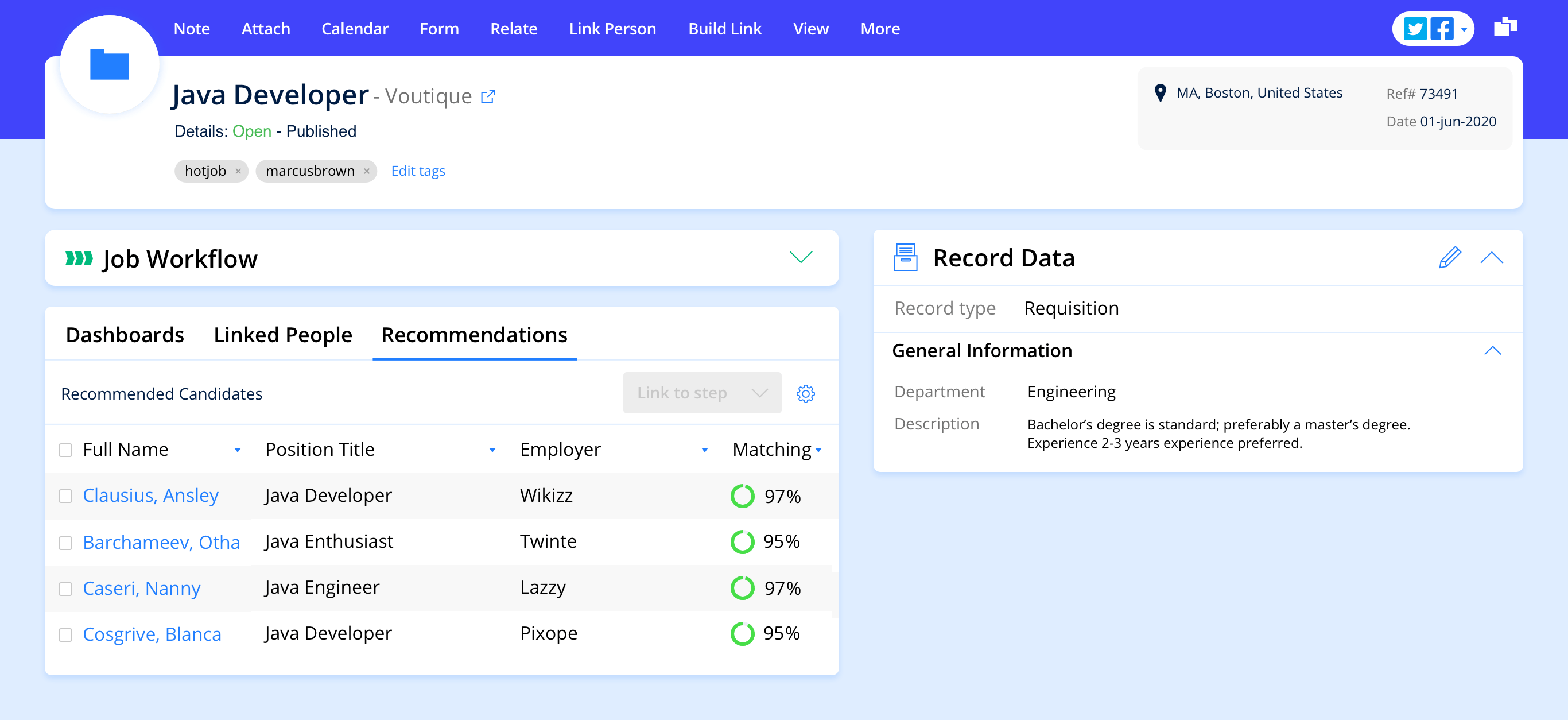

Candidate recommendations help recruiters run skills-focused sourcing strategies designed to keep bias out. When a new role opens, users will find relevant candidates that the artificial intelligence has uncovered from within the database.

Through effective candidate pipelining, recruiters can view AI-powered lists of recommended candidates. With a white-box approach to artificial intelligence, these suggestions provide visibility into the criteria that triggered the algorithm. This way, users can assess them and keep the final, human decision on whether or not to send an interview invitation.
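The white-box idea can be sketched in a few lines of Python. This is an illustrative assumption, not Avature’s algorithm: the `Recommendation` class, the `recommend` function and the scoring rule (share of required skills covered) are all hypothetical. The point is that each suggestion carries the criteria behind it, and that only skills are read from a profile.

```python
from dataclasses import dataclass


@dataclass
class Recommendation:
    candidate: str
    score: float           # share of required skills the candidate covers
    matched_skills: list   # the criteria that produced the score
    missing_skills: list   # gaps the recruiter may want to weigh


def recommend(required_skills, candidates):
    """Rank candidates by skill coverage and expose the reasons for each rank.

    Only the "skills" field of a profile is read; any other field
    (for example gender) is never consulted.
    """
    required = {s.lower() for s in required_skills}
    if not required:
        raise ValueError("at least one required skill is needed")
    results = []
    for name, profile in candidates.items():
        skills = {s.lower() for s in profile["skills"]}
        matched = sorted(required & skills)
        missing = sorted(required - skills)
        results.append(
            Recommendation(name, len(matched) / len(required), matched, missing)
        )
    return sorted(results, key=lambda r: r.score, reverse=True)


# Invented sample data.
candidates = {
    "Ana": {"skills": ["Python", "SQL"], "gender": "F"},
    "Ben": {"skills": ["Python", "SQL", "Docker"], "gender": "M"},
}

for rec in recommend(["python", "sql", "docker"], candidates):
    print(rec.candidate, round(rec.score, 2), rec.matched_skills, rec.missing_skills)
```

The function only ranks and explains. Whether to invite anyone to an interview stays outside the code, with the recruiter.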

“You let AI choose your next sneakers for you, but should you let it choose your next new hire?”

Rabih Zbib

Director of Natural Language Processing & Machine Learning at Avature

Personalized Mobility Recommendations

With internal mobility as one of the hottest strategies to improve employee engagement, organizations must find ways to provide the right talent with the right growth opportunities at the right time. AI-powered capabilities allow employers to evolve towards a skills-based approach, where they can provide employees with a broad range of mobility opportunities tailored to their personal interests as well as organizational needs and goals. These might be open roles, short-term projects, stretch assignments or any other opportunity the organization sees fit.

By implementing artificial intelligence best practices, the internal mobility process becomes a much more powerful one. It drives transparency, reduces bias and promotes professional development, giving employees good reasons to stay at the organization.

The Importance of Data and Data Management

One major truth about artificial intelligence is that it is only as good as the data driving it. Thus, before even thinking about implementing AI in any process, one of the most critical questions is how clean and reliable the data feeding it will be. In this sense, the more extensive and accurate the data, the better the technology.

According to Rabih, one advantage of Avature building its AI internally is that the same vendor that improves the user experience through AI-powered functionalities is also in charge of collecting, organizing and managing the data. By using real data that spans multiple sectors and industries, the AI team knows exactly what information is leveraged and how each solution implements it.
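What “clean and reliable” data means in practice varies by organization, but a minimal Python sketch helps picture it. The record layout (`email`, `skills`) and the `clean_records` function below are assumptions made for illustration, not a description of Avature’s data pipeline.

```python
def clean_records(records):
    """Normalize, validate and deduplicate raw candidate records.

    Assumes each record is a dict with an "email" string and a "skills" list.
    """
    seen_emails = set()
    cleaned = []
    for rec in records:
        email = (rec.get("email") or "").strip().lower()
        # Trim and lowercase skills, dropping blanks and duplicates.
        skills = sorted(
            {s.strip().lower() for s in (rec.get("skills") or []) if s.strip()}
        )
        if not email or not skills:
            continue  # incomplete record: nothing reliable to learn from
        if email in seen_emails:
            continue  # duplicate person: keep the first occurrence only
        seen_emails.add(email)
        cleaned.append({"email": email, "skills": skills})
    return cleaned


# Invented sample data with typical problems: stray spaces, mixed case,
# a duplicate person, a missing email and an empty skills list.
raw = [
    {"email": " Ana@Example.com ", "skills": ["Python ", "python", "SQL"]},
    {"email": "ana@example.com", "skills": ["Go"]},
    {"email": "", "skills": ["Java"]},
    {"email": "ben@example.com", "skills": []},
]

print(clean_records(raw))
# [{'email': 'ana@example.com', 'skills': ['python', 'sql']}]
```

Keeping the first occurrence of a duplicate is one possible policy. Merging the skills of both records would be an equally reasonable choice, depending on how trustworthy each source is.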

A Glimpse Into The Future Development of Artificial Intelligence

As the meeting ended, the participants shared their insights into the future development of artificial intelligence. Most predictions about technology tend to look for radical changes, such as flying cars and Rosie the Robot running house chores (well, we’re actually not that far from that). But the future of AI doesn’t necessarily imply a single, huge, jaw-dropping innovation. Instead, as Dimitri points out, small details add up to a great outcome. And that’s the future of AI.

“You don’t have to take the moonshot with AI. Focus on little things and look for small adjustments and improvements. Eventually, it will look like a moonshot when you look back.”

Dimitri Boylan

CEO of Avature